The Supplier Test Order Validation Framework: Measuring Quality, Speed & Red Flags Before Your First Sale


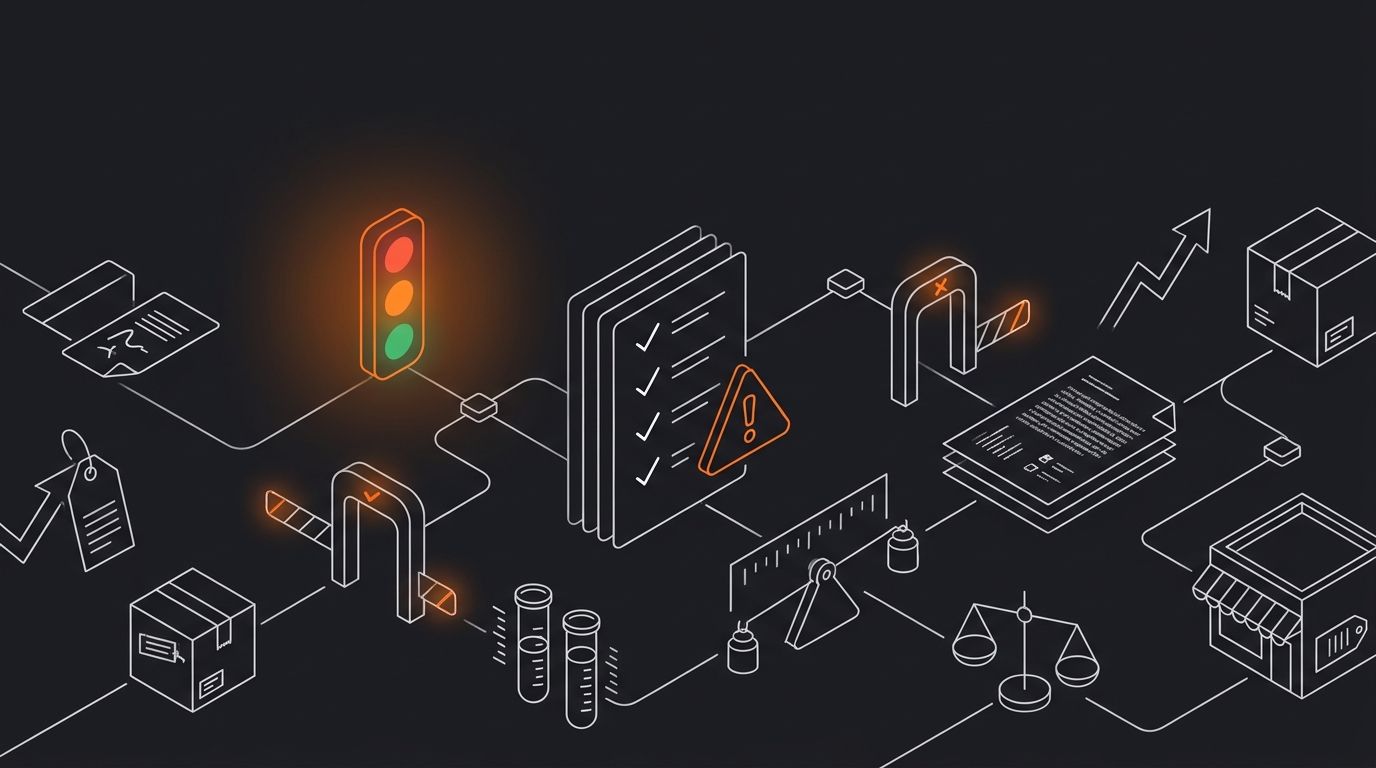

A supplier's first order is a job interview where the candidate knows they're being watched. The packaging will be tighter, the communication faster, the quality closer to the sample than it will ever be again. So if a supplier scores poorly during this window, you're looking at a ceiling, not a floor. Structured test order metrics exist precisely for this moment: to give you a numeric, repeatable way to decide whether a supplier earns a second order or gets cut. Below are six rules that form a working supplier validation checklist, each built around a specific measurement you can run before committing real ad spend.

Score every test order on a 100-point scale

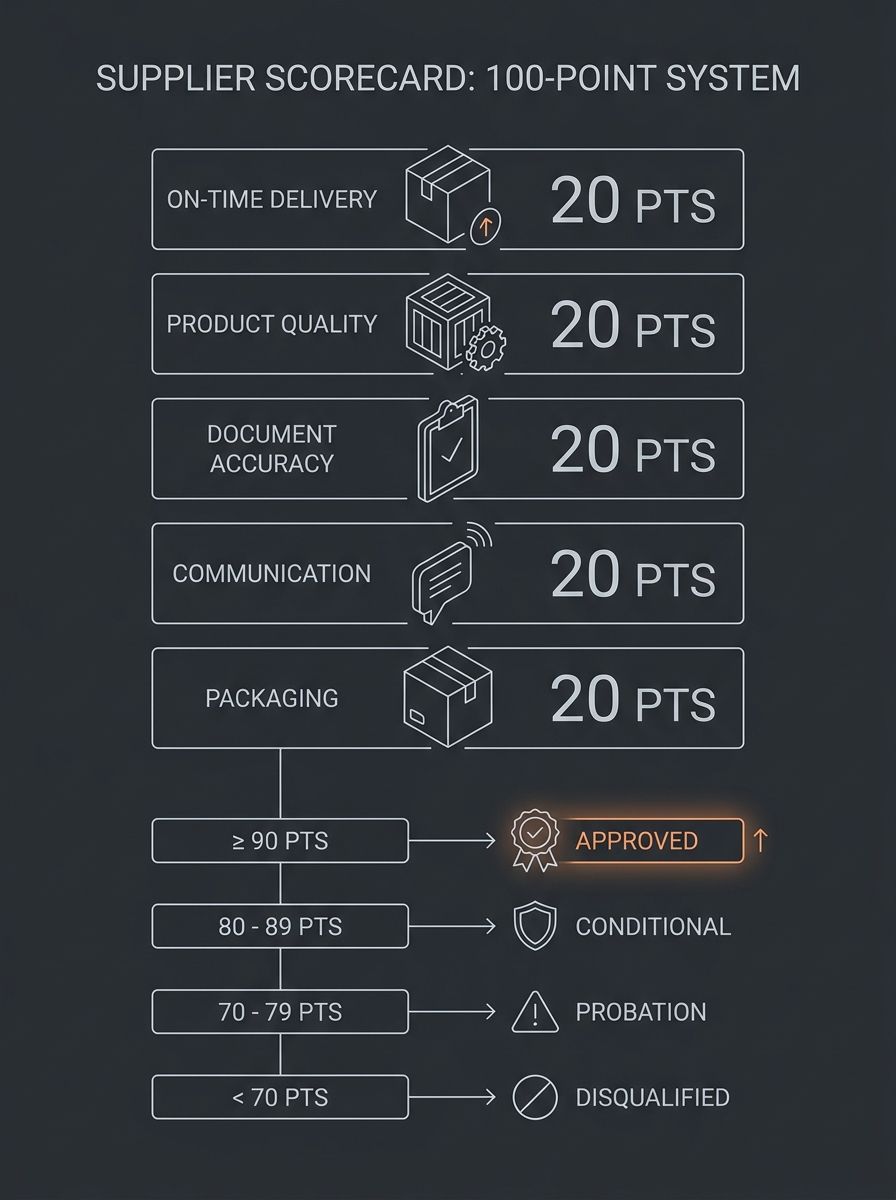

Gut feelings are expensive. A scored rubric forces you to compare suppliers on the same axis and makes the "go/no-go" decision defensible. The framework gaining traction in sourcing circles breaks scoring into five categories, each worth 20 points: on-time delivery, product quality versus approved sample, document accuracy, communication responsiveness, and packaging/labeling compliance.

Here's how the thresholds work in practice:

80–100 points: Approved vendor. Reorder with confidence.

60–79 points: Conditional. One more trial order with a written remediation plan.

40–59 points: Probation. A single small order only, with close monitoring.

Below 40 points: Disqualified. Find someone else.

A score below 60 on a first order, when the supplier is on their best behavior, is a yellow flag that rarely improves with volume. If you're early in your supplier vetting process, this scoring rubric gives you a decision framework that replaces hope with data. We covered what to actually measure during test orders in more detail previously, but the scoring system above is the backbone.
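The rubric and thresholds above can be sketched in a few lines of Python. This is a minimal illustration, not a standard tool: the category keys and the function names `total_score` and `tier` are hypothetical, chosen only to mirror the five categories and four tiers described in this section.

```python
# Hypothetical category keys mirroring the five 20-point categories above.
CATEGORIES = (
    "on_time_delivery",
    "product_quality",
    "document_accuracy",
    "communication",
    "packaging_labeling",
)
MAX_PER_CATEGORY = 20


def total_score(scores: dict[str, int]) -> int:
    """Sum the five category scores after checking each is within 0-20."""
    missing = set(CATEGORIES) - set(scores)
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    for name in CATEGORIES:
        if not 0 <= scores[name] <= MAX_PER_CATEGORY:
            raise ValueError(f"{name} must be between 0 and {MAX_PER_CATEGORY}")
    return sum(scores[name] for name in CATEGORIES)


def tier(total: int) -> str:
    """Map a 0-100 total to the decision tiers listed above."""
    if total >= 80:
        return "approved"
    if total >= 60:
        return "conditional"
    if total >= 40:
        return "probation"
    return "disqualified"


example = {
    "on_time_delivery": 18,
    "product_quality": 15,
    "document_accuracy": 12,
    "communication": 16,
    "packaging_labeling": 14,
}
print(total_score(example), tier(total_score(example)))  # 75 conditional
```

Keeping the category scores in one dictionary per test order makes it easy to line up several suppliers for the same product side by side.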

Measure delivery variance in days, not promises

Suppliers quote lead times. What matters is the gap between the quoted date and the actual arrival date. The On-Time In-Full (OTIF) metric captures both: did the order arrive within the promised window, and was it complete? According to ClicData's supplier quality benchmarks, poor OTIF performance feeds directly into stockouts and customer dissatisfaction.

For a dropshipping operation, delivery variance directly affects customer acquisition cost (CAC): an order that ends in a refund or chargeback wastes the ad spend that won it. Every day a package is late increases the probability of a "Where is my order?" ticket, a chargeback, or a negative review. You're the one left dealing with the flood of angry messages, as ecommerce operators on Reddit have pointed out repeatedly.

Benchmarks vary by supplier origin. Chinese suppliers (MOQ 50–500 units) typically show ±5–10 days of lead time variance with average defect rates of 1.5–3%. Mexican nearshore suppliers (MOQ 20–100 units) tend to land at ±2–5 days with defect rates of 0.5–1.5%. Indian SME suppliers fall somewhere in between: ±7–14 days variance and 2–4% defects.

When you record your test order, log three dates: the date the supplier confirmed the shipment, the date tracking shows first scan, and the date the package physically arrives. That three-date log, across even two or three test orders, tells you whether the supplier pads their estimates or genuinely ships on schedule.
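One way to keep that log is a small record per test order. The sketch below is illustrative only: the `OrderLog` class and its field names are hypothetical, and it assumes you also note the arrival date the supplier quoted, since variance is measured against that promise.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class OrderLog:
    quoted_arrival: date      # assumption: the arrival date the supplier promised
    confirmed_shipment: date  # date the supplier confirmed the shipment
    first_scan: date          # date tracking shows the first carrier scan
    arrived: date             # date the package physically arrived

    def delivery_variance_days(self) -> int:
        """Positive means late versus the quote, negative means early."""
        return (self.arrived - self.quoted_arrival).days

    def handoff_gap_days(self) -> int:
        """Days between 'shipped' confirmation and the first carrier scan."""
        return (self.first_scan - self.confirmed_shipment).days

    def transit_days(self) -> int:
        """Days from first carrier scan to physical arrival."""
        return (self.arrived - self.first_scan).days


log = OrderLog(
    quoted_arrival=date(2025, 3, 20),
    confirmed_shipment=date(2025, 3, 3),
    first_scan=date(2025, 3, 6),
    arrived=date(2025, 3, 24),
)
print(log.delivery_variance_days(), log.handoff_gap_days(), log.transit_days())  # 4 3 18
```

A large handoff gap suggests the supplier marks orders as shipped before they leave the warehouse, while a large transit figure points at the carrier rather than the supplier.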

This rule breaks when you're testing a supplier during known disruption periods (Chinese New Year, monsoon season, port congestion events). Adjust your expectations with context, but still record the variance. If you're navigating how supply chain disputes hit your margins, understanding normal versus abnormal variance for each supplier becomes critical.

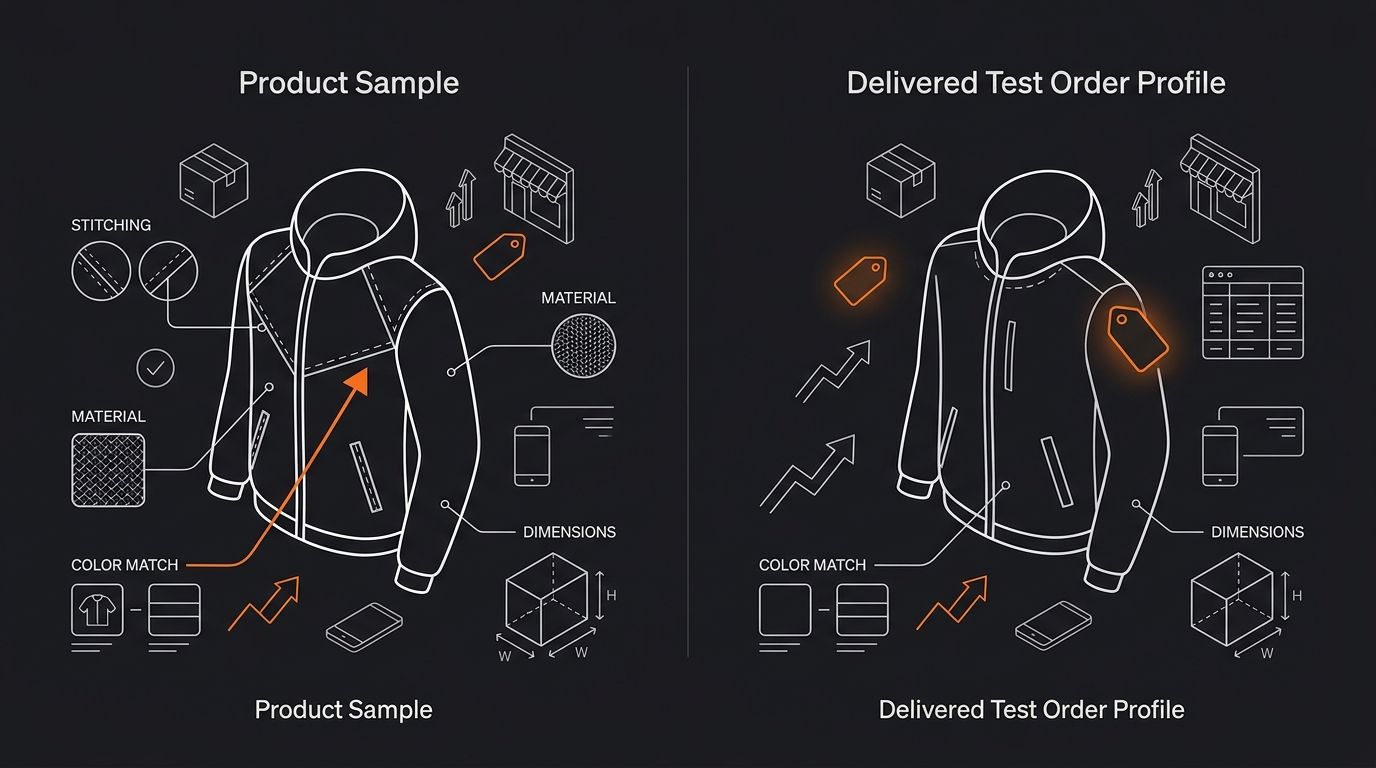

Compare the delivered product against your approved sample, item by item

Requesting a sample and then eyeballing it when it arrives isn't quality control. It's wishful thinking. You need a structured comparison: photograph the sample when it arrives, then photograph the test order product under the same lighting, from the same angles. Compare material feel, stitching, color accuracy, weight, and dimensions.

The defect rate metric matters here. Veridion's supplier performance research defines incident frequency as the number of defective or non-conforming items divided by total items, multiplied by 100. A high incident frequency on a test order is a red flag, full stop. If 3 out of 50 units in a test batch have visible defects (6% defect rate), that supplier scores a zero on the quality portion of your 100-point rubric.
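The calculation itself is simple enough to script. A minimal sketch, with `defect_rate_percent` as a hypothetical helper name, using the 3-in-50 example from the paragraph above:

```python
def defect_rate_percent(defective: int, total: int) -> float:
    """Defective or non-conforming items divided by total items, times 100."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= defective <= total:
        raise ValueError("defective must be between 0 and total")
    return 100 * defective / total


print(defect_rate_percent(3, 50))  # 6.0
```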

Watch for "too perfect" quality documentation as well. Falsified test reports often show suspiciously uniform data, with measurements that never vary and results that look copy-pasted across batches. Real manufacturing processes produce slight variations. Perfectly round numbers on every line of a quality report deserve skepticism.

Track communication speed when something goes wrong

Responsive communication during the quoting phase means almost nothing. Every supplier is attentive when they're trying to win your business. The real test comes when you report a problem: a delayed shipment, a documentation error, a quality discrepancy.

Build a deliberate friction point into your test order. Ask for a packaging change mid-order, request updated tracking after a delay, or flag a minor quality concern and measure how long the response takes. Consistent replies under 24 hours earn full marks. Silence during a problem, or responses that dodge the question, score zero.
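Response times are easier to compare when they are measured from timestamps rather than remembered. The sketch below is one possible way to do that. The function names are hypothetical, and the partial-credit value for replies slower than 24 hours is an assumption of this example, since the rule above only defines full marks and zero.

```python
from datetime import datetime
from typing import Optional

FULL_MARKS = 20
PARTIAL_MARKS = 10  # assumption: the text defines only full marks and zero


def response_hours(reported_at: datetime, replied_at: Optional[datetime]) -> Optional[float]:
    """Hours between reporting a problem and the reply, or None if no reply came."""
    if replied_at is None:
        return None
    return (replied_at - reported_at).total_seconds() / 3600


def communication_score(hours: list[Optional[float]]) -> int:
    """Score one test order's problem reports out of 20."""
    if not hours:
        raise ValueError("log at least one problem report")
    if any(h is None for h in hours):
        return 0  # silence during a problem scores zero
    if all(h < 24 for h in hours):
        return FULL_MARKS  # consistent replies under 24 hours
    return PARTIAL_MARKS  # hypothetical middle ground for slow replies


first = response_hours(datetime(2025, 3, 10, 9, 0), datetime(2025, 3, 10, 15, 30))
print(first)  # 6.5
print(communication_score([first, 20.0]))  # 20
```

Dodged questions still need a human judgment call: log them as `None` if the reply did not actually answer what you asked.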

This measurement matters disproportionately for dropshippers because you don't hold inventory. When a customer's package is stuck in transit, you're relying entirely on the supplier's responsiveness to give you accurate information. If getting a straight answer takes 72 hours during a test order, imagine what happens during peak season when that supplier is juggling 200 other clients.

Suppliers who demonstrate clear, candid, and timely dialogue during the test phase tend to maintain that standard under load. Suppliers who go quiet when pressed tend to get worse.

Verify the supplier's identity before you send money

Before you place a test order, confirm the supplier actually exists as a registered business. This step belongs in every pre-scaling supplier assessment, but an alarming number of dropshippers skip it. Run a WHOIS lookup on the supplier's domain. A domain registered within the last six months, with privacy-masked ownership, deserves extra scrutiny. Cross-reference the business address with Google Maps satellite view. Check for active trade records, business licenses, and a pattern of real customer reviews.
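The domain-age check can be reduced to a small helper once you have read the registration date from a WHOIS result. This sketch does not perform the lookup itself. The function names are hypothetical, and treating six months as 183 days is an assumption of the example.

```python
from datetime import date

SIX_MONTHS_DAYS = 183  # assumption: "six months" approximated as 183 days


def domain_age_days(registered_on: date, checked_on: date) -> int:
    """Age of the domain in days on the day you check it."""
    return (checked_on - registered_on).days


def needs_extra_scrutiny(registered_on: date, privacy_masked: bool, checked_on: date) -> bool:
    """True for a recently registered domain with privacy-masked ownership."""
    is_recent = domain_age_days(registered_on, checked_on) < SIX_MONTHS_DAYS
    return is_recent and privacy_masked


print(domain_age_days(date(2025, 1, 15), date(2025, 4, 1)))  # 76
print(needs_extra_scrutiny(date(2025, 1, 15), True, date(2025, 4, 1)))  # True
```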

No online presence or a pattern of negative feedback is a clear warning sign. And if a supplier who claims to be a manufacturer has zero presence on platforms like Alibaba, 1688, or Global Sources, you should ask why.

Payment protection matters here too. Use escrow or platform-protected payments (Trade Assurance on Alibaba, PayPal for small orders) so you have recourse if the test order never arrives. Never wire money directly to a supplier you haven't verified through at least two independent sources. If you're weighing direct supplier relationships versus platform middlemen, the verification burden shifts depending on which route you choose, but it never disappears.

Also worth noting: Nacha's 2026 ACH rule changes now require pre-payment account validation before sending the first ACH credit to a supplier, as PaymentWorks has documented. Supplier verification is moving from best practice to regulatory requirement.

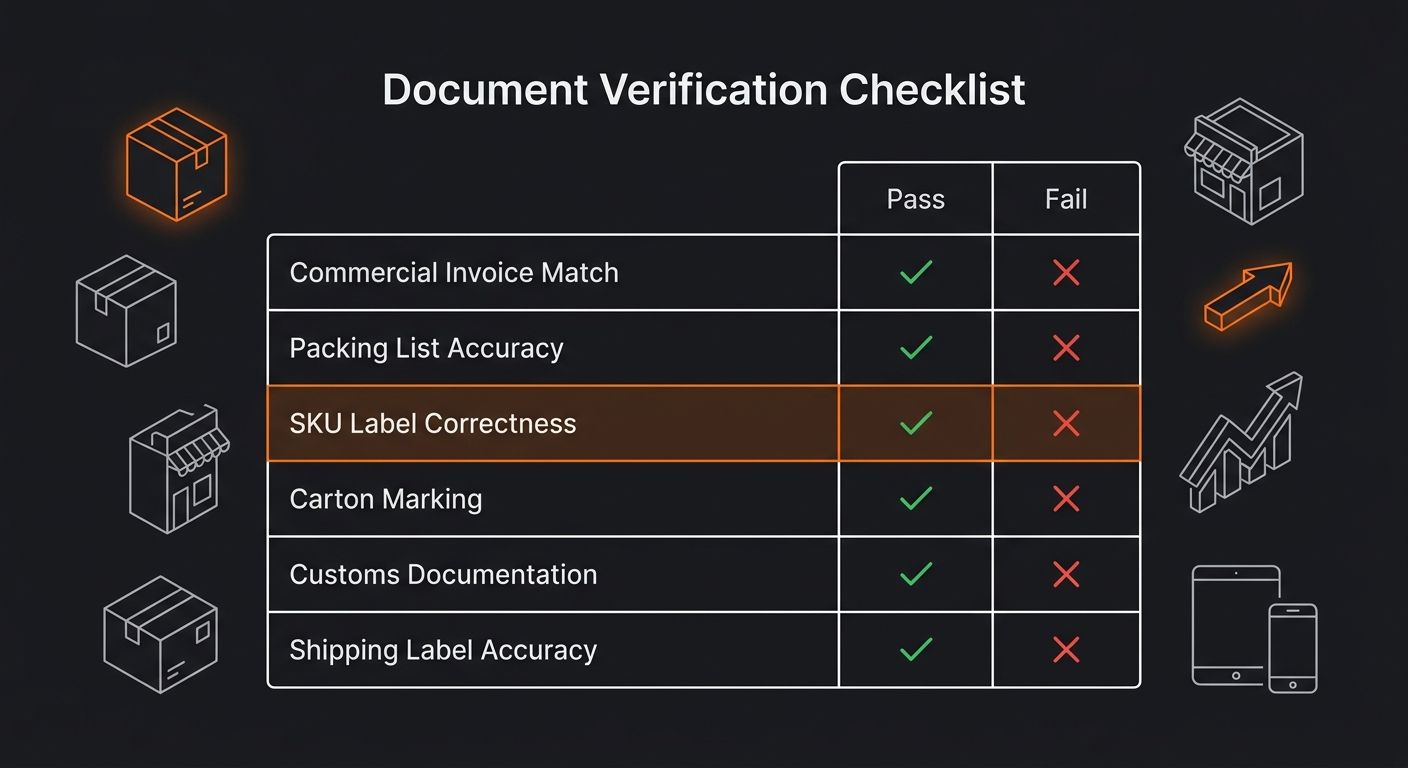

Treat document errors as disqualifying failures

This is the most overlooked category in the supplier validation checklist, and it's the one that causes the most expensive problems at scale. When a supplier's commercial invoice doesn't match the packing list, when SKU labels are wrong, when carton marks are missing or incorrect, you're looking at customs delays, misrouted inventory, and manual reconciliation that eats hours.

On a test order, each document check is binary. Either the invoice matches the packing list on first submission, or it doesn't. Either the SKU labels are correct, or they require reprinting. Suppliers who fail enough of these checks to score below 15 out of 20 on this category during a test order will generate ongoing operational friction that compounds when automation tools try to sync their data.
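The invoice-versus-packing-list check lends itself to a mechanical comparison. A minimal sketch, assuming each document has been keyed by SKU with a unit quantity (the function name, the dictionary layout, and the SKUs are all hypothetical):

```python
def document_mismatches(
    invoice: dict[str, int], packing_list: dict[str, int]
) -> dict[str, tuple[int, int]]:
    """Return {sku: (invoice_qty, packing_qty)} for every SKU that disagrees.

    A SKU missing from one document is treated as quantity 0 there.
    """
    mismatches = {}
    for sku in sorted(set(invoice) | set(packing_list)):
        invoice_qty = invoice.get(sku, 0)
        packing_qty = packing_list.get(sku, 0)
        if invoice_qty != packing_qty:
            mismatches[sku] = (invoice_qty, packing_qty)
    return mismatches


invoice = {"RING-01": 30, "RING-02": 20}
packing_list = {"RING-01": 30, "RING-02": 18, "RING-03": 2}
print(document_mismatches(invoice, packing_list))
# {'RING-02': (20, 18), 'RING-03': (0, 2)}
```

An empty result means the two documents agree on first submission. Anything else is a failed check for that test order.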

As with delivery and defects, benchmarks vary by origin: Chinese suppliers typically hit 80–90% document accuracy on first pass, while Mexican nearshore suppliers reach 90–98%. If your test order comes back with customs holds caused by invoice errors, that's a zero on this category and a strong argument for disqualification regardless of how the other four categories scored.

When these rules break down

These six rules assume you can afford to walk away from a supplier who fails. That assumption breaks in a few scenarios. If you're sourcing a product from a sole manufacturer with no alternatives, your scoring threshold drops and your negotiation strategy changes. You fix problems rather than disqualify.

The rules also bend for suppliers in emerging categories (custom formulations, handmade goods, regional specialties) where lead time variance and defect rates naturally run higher than mass-manufactured products. Adjust your benchmarks to the category, but still score. The number itself is what lets you track improvement over time.

And if you're brand new to the space, working through a beginner's guide to dropshipping operations before running test orders will give you the operational context to interpret these scores correctly. A 75-point supplier looks different when you understand your own fulfillment workflow versus when you're guessing at it.

The framework holds its value because it replaces subjective judgment with repeatable measurement. Run it once and you have a data point. Run it across three suppliers for the same product and you have a comparison that pays for itself in avoided refunds, avoided chargebacks, and avoided late-night customer service sessions. The suppliers who score well on test orders tend to be the ones who don't blow up your margins six months later.

365 Dropship Editorial

Editorial team writing about E-commerce, dropshipping, and product discovery — reviews of dropshipping suppliers and platforms, trending niche guides (jewelry, beauty, pets, home, fashion), supplier due diligence, ecom operations, shipping & fulfillment strategy, product research, AOV optimization, and profitable dropshipping case studies.
