The Supplier Test Order Playbook: What to Actually Measure Before Scaling

A fulfillment center shipping 10,000 orders with a 99.6% accuracy rate still sends 40 wrong packages. At a $45 AOV with a $12 margin per order, those 40 errors wipe out roughly $480 in margin (40 × $12), and that's before you count refund and reshipping costs, chargebacks, and one-star reviews. The standard dropshipping supplier evaluation process (place one order, wait for it to show up, eyeball the product) produces almost no usable signal about whether a supplier can handle real volume. A structured test order protocol is a measurement instrument with specific metrics, benchmarks, and pass/fail thresholds. Treating it like a vibe check is how operators end up scaling into a fulfillment disaster.
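The opening figures come from two multiplications: 10,000 orders × a 0.4% error rate gives 40 wrong packages, and 40 × the $12 margin gives $480. A minimal Python sketch of that arithmetic, with a hypothetical function name, makes it easy to plug in your own volume, accuracy, and margin:

```python
def error_cost(orders: int, accuracy: float, margin_per_order: float) -> tuple[int, float]:
    """Return (wrong_packages, margin_lost) for a given order accuracy rate."""
    wrong = round(orders * (1 - accuracy))  # e.g. 10,000 x 0.4% = 40
    return wrong, wrong * margin_per_order  # margin on the failed orders only

wrong, lost = error_cost(orders=10_000, accuracy=0.996, margin_per_order=12.0)
print(wrong, lost)
```

This counts only the margin on the failed orders; refunds, reshipping, and chargeback fees would be added on top.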

What a Test Order Actually Measures (and What It Doesn't)

The mechanism is straightforward: you place multiple orders to a single supplier under controlled conditions, then record hard numbers against a scorecard. The goal isn't to confirm "yeah, the product exists." It's to stress-test five specific dimensions of the supplier relationship before you commit ad spend.

Those five dimensions:

Order accuracy — did the right item, variant, and quantity arrive?

Packaging quality — would this survive customer unboxing expectations?

Shipping speed — how many calendar days from order placement to doorstep?

Communication responsiveness — how fast and how coherently does the supplier reply?

Defect and damage rate — across multiple units, what percentage has issues?

A single test order can't produce statistically meaningful data on defect rates. You need a minimum of 5–10 units, ideally split across variants (different colors, sizes, SKUs) to surface picking errors and QC inconsistencies. According to supplier quality management best practices, trial production runs and sample testing are standard steps to verify product quality before scaling procurement.
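To see why one unit tells you almost nothing, here is a sketch that uses the Wilson score interval. This is a standard statistical technique swapped in for illustration, not part of the original protocol. It estimates the range of true defect rates that are still consistent with a clean sample:

```python
import math

def wilson_interval(defects: int, units: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a defect rate from a small sample."""
    p = defects / units
    denom = 1 + z * z / units
    center = (p + z * z / (2 * units)) / denom
    half = z * math.sqrt(p * (1 - p) / units + z * z / (4 * units * units)) / denom
    return max(0.0, center - half), min(1.0, center + half)

for n in (1, 10):
    low, high = wilson_interval(defects=0, units=n)
    print(f"0 defects in {n} units: true defect rate could still be up to {high:.0%}")
```

Zero defects in one unit is still consistent with a defect rate of roughly 79%. Zero defects in ten units narrows that to roughly 28%. That is better, but it is still only a screening signal.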

Order Accuracy and Packaging: The First 30 Seconds

Order accuracy rate measures how often customers receive the correct items, quantities, and packaging. Industry benchmarks put a strong fulfillment partner at 99.5% or above. For your test orders, the math is unforgiving: if you order 10 units and even 1 arrives wrong, that's a 90% accuracy rate, far below the benchmark. Walk away.

Here's what to document for each test order:

Correct SKU/variant? Check color, size, model number against what you ordered.

Quantity accurate? Sounds obvious. Suppliers occasionally short-ship or double-ship.

Invoice/packing slip included? If your supplier includes their own branding or pricing, that's a dealbreaker for white-label operations.

Packaging condition on arrival. Was the outer box crushed? Was there bubble wrap or foam? Did poly mailers have adequate sealing?

The packaging piece matters more than new operators expect. If you're selling $35+ jewelry or beauty products, a crumpled poly mailer with no branded insert tells your customer everything about the experience they can expect going forward. We've covered the importance of working with real suppliers over AliExpress connectors before, and packaging quality is one of the biggest reasons the distinction matters.

Score each order on a 1–5 scale for accuracy and a separate 1–5 scale for packaging presentation, then average each score across all test units.
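One way to keep the checklist consistent across a batch is to log every received order as a record and roll the batch up at the end. This minimal Python sketch does that. The field and function names are hypothetical, and the 99.5% cutoff is the benchmark quoted above:

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class TestOrderCheck:
    """One received test order (hypothetical field names mirroring the checklist)."""
    sku_correct: bool        # right color, size, model number
    quantity_correct: bool   # no short-ship or double-ship
    supplier_branding: bool  # supplier's own branding or pricing on the slip
    accuracy_score: int      # 1-5
    packaging_score: int     # 1-5

def summarize(checks: list[TestOrderCheck]) -> dict:
    """Roll a batch of test orders up into the numbers the scorecard needs."""
    accurate = sum(c.sku_correct and c.quantity_correct for c in checks)
    rate = accurate / len(checks)
    return {
        "accuracy_rate": rate,
        "below_benchmark": rate < 0.995,  # 99.5% benchmark for a strong partner
        "branding_dealbreaker": any(c.supplier_branding for c in checks),
        "avg_accuracy": mean(c.accuracy_score for c in checks),
        "avg_packaging": mean(c.packaging_score for c in checks),
    }

# Nine clean orders and one wrong variant
batch = [TestOrderCheck(True, True, False, 5, 4) for _ in range(9)]
batch.append(TestOrderCheck(False, True, False, 2, 4))
print(summarize(batch))
```

For this batch, one wrong variant is enough to put the accuracy rate at 90% and trip the benchmark flag, even though the average accuracy score still looks healthy at 4.7.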

Shipping Speed: The Numbers That Actually Matter

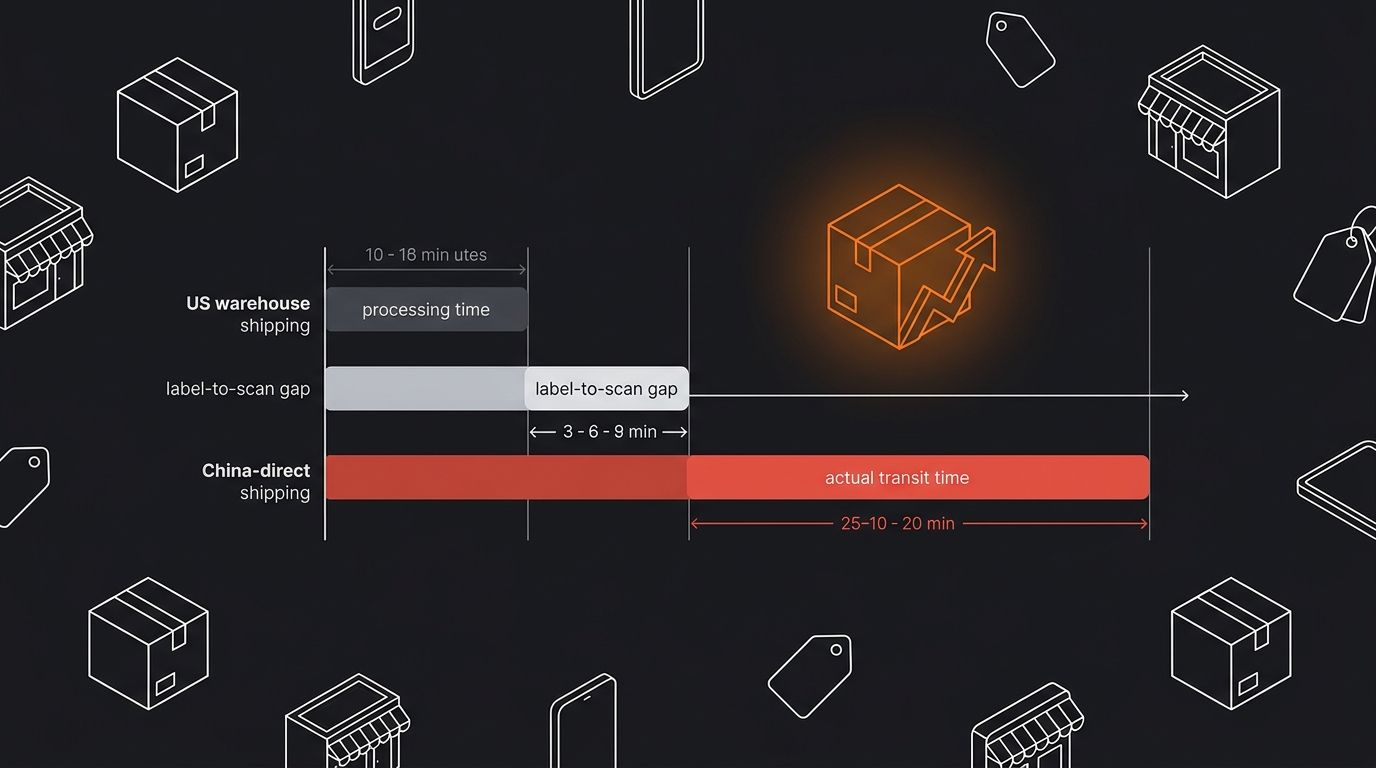

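Forget the supplier's listed shipping estimate. Your test order protocol needs to capture four timestamps (order placed, tracking number issued, first carrier scan, delivery), which split the fulfillment window into three distinct intervals: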

Order placement to tracking number issued. This is processing time. Acceptable for US/EU warehouse suppliers: 1–2 business days. For China-direct, 2–4 business days.

Tracking number issued to first carrier scan. This gap reveals whether the supplier prints labels before actually shipping. A gap of 3+ days between label creation and first scan usually means they're generating labels early to make their processing times look faster than they are.

First carrier scan to delivery. Actual transit. This is largely carrier-dependent, but the supplier chose the carrier.

Add all three together. That's your real fulfillment window. For US customers ordering from a US warehouse supplier, you want this total under 7 calendar days. For China-direct via ePacket or Yanwen, expect 12–20 days. Anything above 20 days and you're looking at chargeback territory.
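A minimal Python sketch of the timestamp math, assuming you record each of the four events as a `datetime`. The origin labels `"us_warehouse"` and `"china_direct"` are hypothetical. Everything is measured in calendar days, which is how the total is judged. The processing benchmark above is stated in business days, so check that interval separately.

```python
from datetime import datetime, timedelta

DAY = timedelta(days=1)

def fulfillment_window(placed: datetime, tracking_issued: datetime,
                       first_scan: datetime, delivered: datetime) -> dict:
    """Split one test order's timeline into the three intervals, in calendar days."""
    processing = (tracking_issued - placed) / DAY     # placement -> tracking number
    label_gap = (first_scan - tracking_issued) / DAY  # tracking number -> first carrier scan
    transit = (delivered - first_scan) / DAY          # first scan -> delivery
    return {
        "processing_days": processing,
        "label_gap_days": label_gap,
        "transit_days": transit,
        "total_days": processing + label_gap + transit,
        "label_gap_flag": label_gap >= 3,  # 3+ days between label and first scan
    }

def within_target(total_days: float, origin: str) -> bool:
    """Compare the total against the targets in the text (origin labels are hypothetical)."""
    if origin == "us_warehouse":
        return total_days < 7   # US warehouse to US customer: under 7 calendar days
    return total_days <= 20     # China-direct: above 20 days is chargeback territory

w = fulfillment_window(datetime(2024, 3, 1, 10), datetime(2024, 3, 2, 15),
                       datetime(2024, 3, 6, 9), datetime(2024, 3, 12, 14))
print(round(w["total_days"], 2), w["label_gap_flag"], within_target(w["total_days"], "us_warehouse"))
```

In this example, the order left processing in about a day, but it then sat for 3.75 days before the first carrier scan. The supplier's own processing number looks fine, while the real total is over 11 days.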

If you're still evaluating which platforms and suppliers to work with, the breakdown of top dropshipping platforms includes warehouse location data and average shipping windows for each option.

Communication Responsiveness Under Simulated Pressure

This is the test order metric operators skip entirely. Send your supplier 3–4 messages during the test order period, each simulating a real scenario:

A tracking inquiry ("My customer is asking where their order is, can you provide an update?")

A modification request ("Can we change the shipping address on order #X before it ships?")

A product question ("Customer is asking if the product contains a specific material. Can you confirm?")

A problem escalation ("This order arrived with damage. What's your process for replacement?")

Record response time in hours for each message, and score the quality of the response. Did they actually answer the question, or paste a generic template?

Benchmarks: Under 12 hours is good. Under 4 hours during business hours is excellent. Over 24 hours is a red flag. Over 48 hours is a disqualifier.
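A small Python sketch that maps each logged reply time onto those benchmark bands. The text doesn't name the 12–24 hour range, so the "acceptable" label here is an assumption. The `business_hours` flag marks whether the message went out during the supplier's business hours.

```python
def classify_response(hours: float, business_hours: bool = True) -> str:
    """Map a supplier reply time (in hours) to the benchmark bands above."""
    if hours > 48:
        return "disqualifier"
    if hours > 24:
        return "red flag"
    if hours < 4 and business_hours:
        return "excellent"
    if hours < 12:
        return "good"
    return "acceptable"  # 12-24 h is not covered by the benchmarks; label is an assumption

replies = [2.5, 9, 30]  # hours to reply for three simulated messages
print([classify_response(h) for h in replies])
```

It's worth logging the band for every message rather than just the average, because a single reply over 48 hours disqualifies the supplier regardless of how fast the others were.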

Pay close attention to language quality too. If you're selling to English-speaking customers and the supplier's responses are difficult to parse, that communication gap will compound at scale when you're handling 20+ support tickets per day that require supplier coordination. This ties directly into how inventory sync failures and supplier miscommunication cascade into overselling crises.

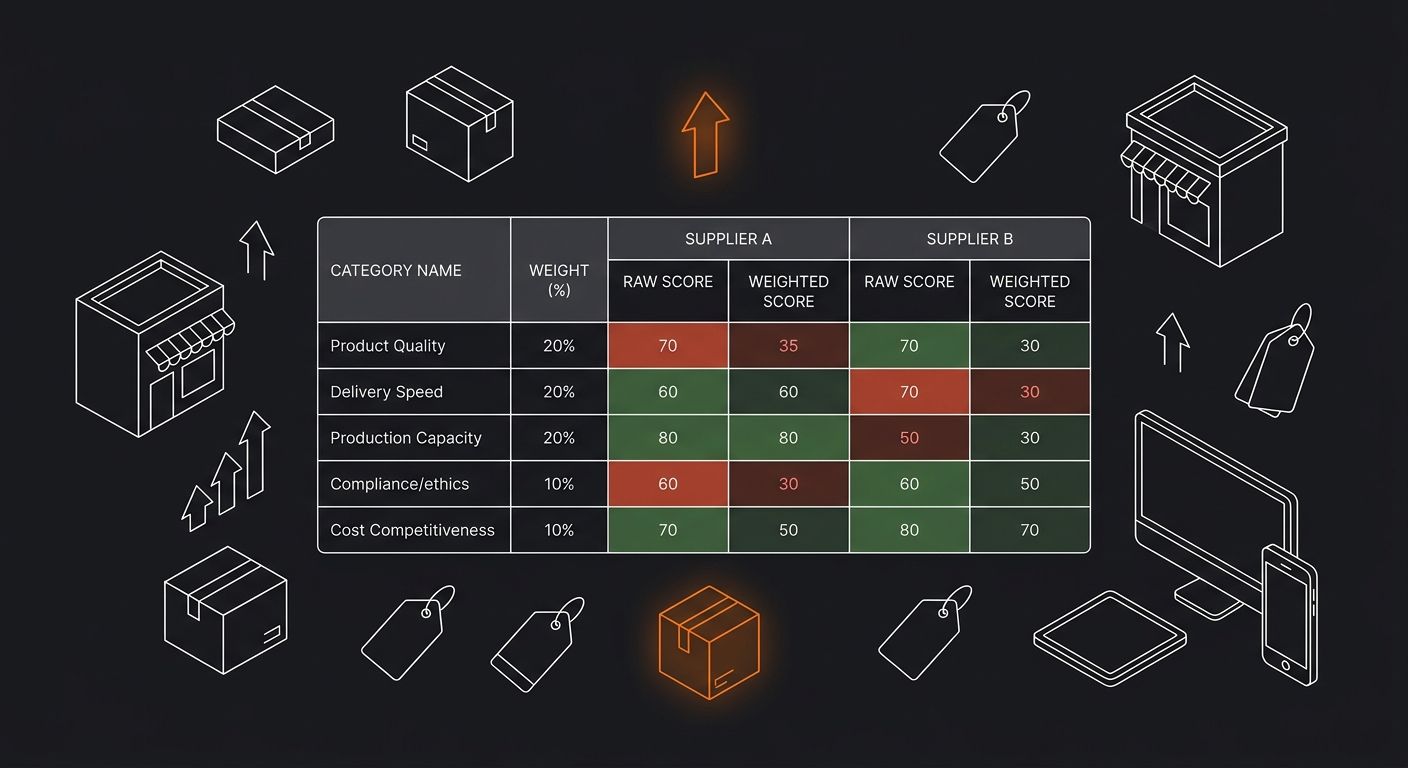

Building the Scorecard

A supplier scorecard is a systematic way to evaluate candidates on standardized criteria using a numeric rating system. Here's a stripped-down version that works for test order evaluation:

Each category gets a score from 1 to 5 and carries a fixed weight:

Order Accuracy: 25%

Packaging Quality: 15%

Total Shipping Days: 25%

Communication Speed: 15%

Communication Quality: 10%

Defect/Damage Rate: 10%

Multiply each score (1–5) by its weight, then sum the results. Maximum possible: 5.0. Your threshold for scaling should be 3.8 or above. Below 3.5, walk away. Between 3.5 and 3.8, retest with another batch before committing.

A real example: Supplier A scores 5 on accuracy, 3 on packaging, 4 on shipping speed, 4 on communication speed, 3 on communication quality, 4 on defect rate.

Weighted score: (5 × 0.25) + (3 × 0.15) + (4 × 0.25) + (4 × 0.15) + (3 × 0.10) + (4 × 0.10) = 1.25 + 0.45 + 1.00 + 0.60 + 0.30 + 0.40 = 4.0

That's a passing supplier. Scaling is reasonable. But note the packaging weakness. If you're in a niche where unboxing matters (jewelry, beauty, premium home goods), you'd weight packaging at 25% instead of 15%. If those extra 10 points come out of order accuracy, the same supplier scores (5 × 0.15) + (3 × 0.25) + (4 × 0.25) + (4 × 0.15) + (3 × 0.10) + (4 × 0.10) = 3.8, which sits right on the scaling threshold with no margin to spare. Adjust weights based on your specific business model, and keep them summing to 100%. A COD-heavy (cash-on-delivery) store might weight shipping speed even higher, because late deliveries lead directly to RTO (return to origin) losses.
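The scorecard math fits in a few lines of Python. In this minimal sketch, the category keys are hypothetical names for the six categories above. The weights are stored as integer percentages so that the sum is exact at the 3.8 and 3.5 cutoffs, with no floating-point rounding right at the line.

```python
# Default weights from the scorecard, as integer percentages (they must sum to 100).
DEFAULT_WEIGHTS = {
    "order_accuracy": 25,
    "packaging_quality": 15,
    "total_shipping_days": 25,
    "communication_speed": 15,
    "communication_quality": 10,
    "defect_damage_rate": 10,
}

def weighted_score(scores: dict[str, int], weights: dict[str, int] = DEFAULT_WEIGHTS) -> float:
    """Sum of score (1-5) x weight, returned on the 0-5.0 scale."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100%")
    return sum(scores[k] * w for k, w in weights.items()) / 100

def decision(score: float) -> str:
    """Apply the thresholds: 3.8+ scale, 3.5-3.8 retest, below 3.5 walk away."""
    if score >= 3.8:
        return "scale"
    if score >= 3.5:
        return "retest with another batch"
    return "walk away"

supplier_a = {
    "order_accuracy": 5, "packaging_quality": 3, "total_shipping_days": 4,
    "communication_speed": 4, "communication_quality": 3, "defect_damage_rate": 4,
}
score = weighted_score(supplier_a)
print(score, decision(score))
```

For a niche-specific scorecard, pass a different `weights` dict. The check rejects any set of weights that doesn't add up to 100%, which is the easiest mistake to make when you shift weight toward one category.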

The Volume Ceiling Nobody Tests For

Beyond individual order quality, you need to understand whether a supplier can handle your projected volume. Evaluating current capacity against future demand is standard procurement practice, but dropshippers rarely do it.

Ask your supplier directly:

What's your maximum daily order capacity?

What happens during peak season (Q4, BFCM)? Do processing times increase?

Do you hold safety stock, or do you produce/source to order?

If I need to scale from 20 orders/day to 100 orders/day over 30 days, can you handle that ramp?

The answers tell you whether you'll hit a fulfillment ceiling right when ad spend starts producing results. And vague answers like "we can handle any volume" are worse than honest limitations, because they signal a supplier who hasn't actually thought about capacity planning.
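To turn the supplier's stated capacity into a date, you can project your ramp and find the first day it crosses that ceiling. This Python sketch assumes a straight-line ramp, which is a simplification, and uses a hypothetical stated maximum of 60 orders per day. If the supplier admits that processing slows in Q4, rerun it with a lower peak-season capacity.

```python
from typing import Optional

def ceiling_day(start: int, target: int, ramp_days: int, max_daily: int) -> Optional[int]:
    """First day of a linear ramp on which projected daily orders exceed the stated maximum.

    Returns None if the ramp never crosses the ceiling.
    """
    for day in range(ramp_days + 1):
        projected = start + (target - start) * day / ramp_days
        if projected > max_daily:
            return day
    return None

# 20 -> 100 orders/day over 30 days, against a hypothetical stated capacity of 60/day
print(ceiling_day(start=20, target=100, ramp_days=30, max_daily=60))
```

Here, the ramp outgrows the supplier on day 16, only about halfway through the scale-up.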

If you're still in the early stages of figuring out how all these pieces connect, the beginner's operational guide walks through how supplier selection fits into the broader store-building sequence.

Where This Framework Falls Apart

This test order protocol has real limitations, and pretending otherwise would waste your time.

Sample size is still small. Ten test units don't capture seasonal variation, staff turnover at the supplier's warehouse, or the quality drift that happens when a supplier takes on too many clients. Your scorecard gives you a snapshot, not a guarantee. Continuous monitoring after scaling, tracking the same metrics on a monthly cadence, is the only way to catch degradation before it tanks your margins.

Suppliers know they're being tested. Some suppliers prioritize known test orders with faster processing and better packaging. The real performance shows up at order 500, not order 5. One partial mitigation: don't identify yourself as a new client evaluating them. Order through your store's normal checkout flow if possible.

Communication tests are artificial. Sending simulated problem messages gives you a baseline, but real crisis communication under volume pressure looks different. A supplier who replies thoughtfully to your test inquiry might send one-line dismissals when they're processing 2,000 orders on a Monday morning.

The scorecard doesn't capture financial risk. A supplier might score 4.5 on your fulfillment scorecard but have shaky financial stability, a single-source dependency on one factory, or no backup logistics chain. Those risks don't appear in any test order.

So the framework gives you a strong initial filter. It eliminates the obviously bad suppliers and surfaces specific weaknesses in otherwise promising ones. What it can't replace is ongoing performance tracking once real orders start flowing. Set a calendar reminder to re-run your scorecard assessment at 30, 90, and 180 days post-launch. The supplier who scored a 4.2 in testing might be sitting at a 3.1 three months later, and catching that drift early is what separates operators who scale profitably from those who discover the problem through a wave of chargebacks and angry customer emails.
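A minimal Python sketch of that re-check cadence, assuming you know the launch date. It generates the 30/90/180-day review dates and flags any re-run score that has slipped below the 3.8 scaling threshold. The function names are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

CHECKPOINT_DAYS = (30, 90, 180)  # re-run cadence suggested above

def rerun_schedule(launch: date) -> list[date]:
    """Calendar dates on which to re-run the scorecard after launch."""
    return [launch + timedelta(days=d) for d in CHECKPOINT_DAYS]

def flag_drift(test_score: float, rerun_score: float, scale_threshold: float = 3.8) -> Optional[str]:
    """Return a warning if a re-run score has fallen below the scaling threshold."""
    if rerun_score < scale_threshold:
        return f"score fell from {test_score:.1f} to {rerun_score:.1f}, below {scale_threshold}"
    return None

print(rerun_schedule(date(2024, 1, 15)))
print(flag_drift(4.2, 3.1))
```

Because the warning is keyed to the same threshold used for the original go/no-go decision, a supplier who would no longer pass testing gets flagged, even if nothing has visibly broken yet.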

365 Dropship Editorial

Editorial team writing about E-commerce, dropshipping, and product discovery — reviews of dropshipping suppliers and platforms, trending niche guides (jewelry, beauty, pets, home, fashion), supplier due diligence, ecom operations, shipping & fulfillment strategy, product research, AOV optimization, and profitable dropshipping case studies.
