The Supplier Test Order Playbook: What to Measure Beyond Shipping Speed

Three-to-five business days from Zendrop. Seven-to-twelve from a CJ China warehouse. Two-to-four from a Spocket US-based partner. Every test order debrief you've read starts and ends with transit time, and that focus is actively misleading. Shipping speed is the single least predictive metric for whether a supplier will protect your margins once you're processing 50+ orders a day. The mechanism that actually predicts supplier reliability is a structured test order validation process that scores packaging, accuracy, communication cadence, defect rates, and backend data sync before you spend a dollar on ads.

This article breaks down each layer of that mechanism so you know exactly what to record, what thresholds to set, and where the whole model still has blind spots.

Order Accuracy and SKU Matching

Your test order arrives on day four. You rip the package open, confirm the product looks right, and check "pass." That's the version of pre-launch supplier testing that gets people burned.

Actual order accuracy testing means placing an order for a specific variant (size medium, color navy, SKU #NV-M-2024) and confirming the supplier fulfilled that exact variant. If you sell a product with three sizes and four colors, that's twelve possible SKUs. Many AliExpress and CJ suppliers pull from shared warehouse bins where navy and black look identical under fluorescent light. A 2% SKU mismatch rate at 100 orders/day means two customers per day receive the wrong item. At a $12 average product cost and a $6 return shipping label, you're leaking $36/day in direct costs before you count the refund itself, the lost customer, or the Stripe dispute fee.
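
The leak figure is simple arithmetic: orders per day times the mismatch rate gives the number of wrong shipments, and each one costs the product plus the return label. Below is a minimal sketch that uses the paragraph's own numbers. The function name is hypothetical. As in the text, refunds, lost customers, and dispute fees are left out.

```python
def daily_mismatch_cost(orders_per_day: int, mismatch_rate: float,
                        product_cost: float, return_label: float) -> float:
    """Direct daily cost of shipping the wrong SKU (hypothetical helper).

    Counts only the product cost and the return label; refunds, lost
    customers, and dispute fees are deliberately excluded.
    """
    wrong_shipments = orders_per_day * mismatch_rate
    return wrong_shipments * (product_cost + return_label)


# 2% mismatch at 100 orders/day, $12 product cost, $6 return label
cost = daily_mismatch_cost(100, 0.02, 12.0, 6.0)
print(f"${cost:.2f}/day")  # prints $36.00/day
```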

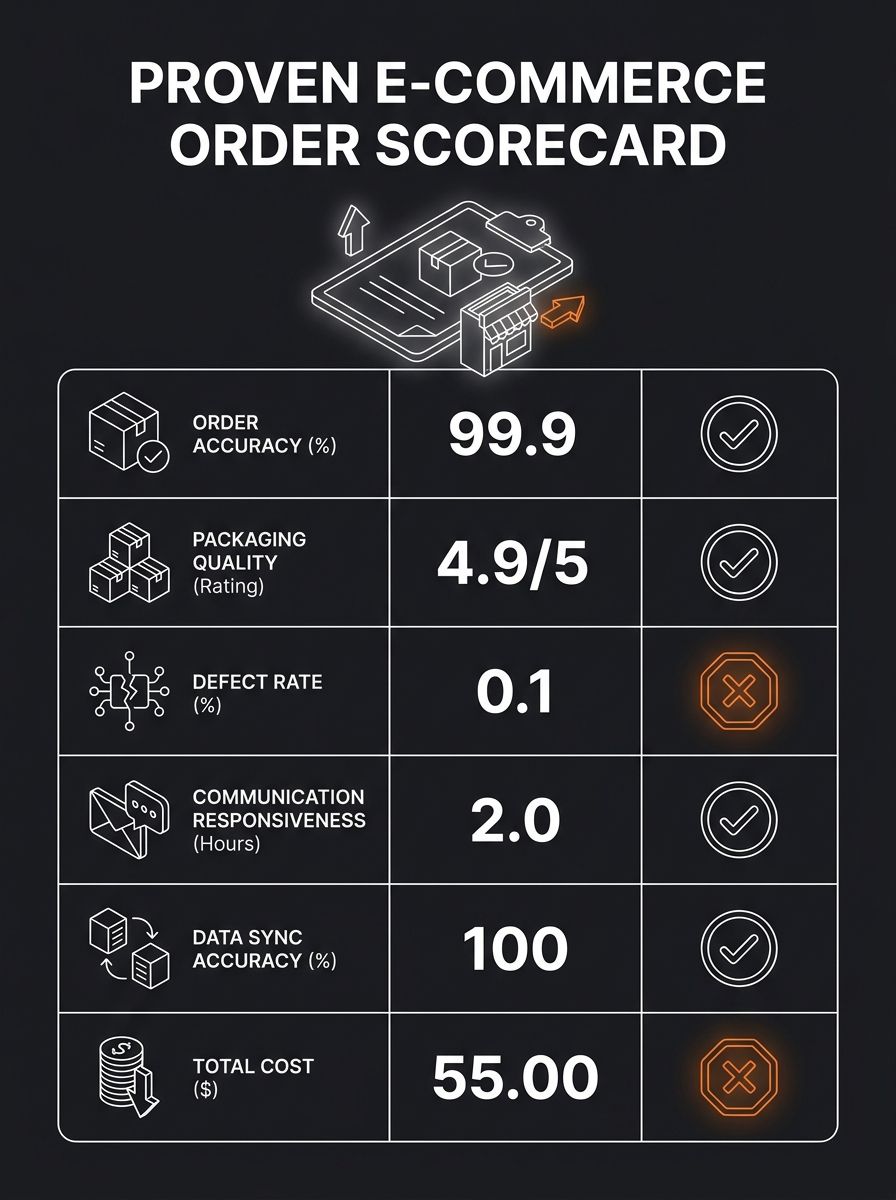

Here's how to test it properly: place three to five test orders, each with a different variant. Record whether the delivered product matches the ordered SKU down to color code and sizing. According to Carro's supplier quality framework, the core metrics for supplier quality assessment include defect rate, on-time delivery rate, and return or rejection rate. Order accuracy feeds directly into all three.

Your target: 100% accuracy across test orders. Anything less on a sample of three to five orders means you're dealing with a warehouse that doesn't have tight pick-and-pack controls, and the problem will get worse at volume, not better.
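
If you log each test order as an ordered/delivered SKU pair, the pass/fail call becomes mechanical. Below is a minimal sketch. Apart from NV-M-2024, the SKU codes are hypothetical. Normalizing case and stray whitespace before comparing is an assumption about how you record SKUs.

```python
from typing import List, Tuple


def order_accuracy(results: List[Tuple[str, str]]) -> float:
    """Share of test orders where the delivered SKU matches the ordered SKU."""
    if not results:
        raise ValueError("need at least one test order")
    matches = sum(
        1 for ordered, delivered in results
        if ordered.strip().upper() == delivered.strip().upper()
    )
    return matches / len(results)


# Hypothetical test log: (ordered SKU, SKU actually delivered)
test_log = [
    ("NV-M-2024", "NV-M-2024"),
    ("NV-L-2024", "NV-L-2024"),
    ("BK-M-2024", "NV-M-2024"),  # navy shipped instead of black
    ("BK-S-2024", "BK-S-2024"),
    ("NV-S-2024", "NV-S-2024"),
]

accuracy = order_accuracy(test_log)
passed = accuracy == 1.0  # the target above: 100% on the test sample
print(f"accuracy={accuracy:.0%} pass={passed}")  # prints accuracy=80% pass=False
```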

Packaging Quality and Unboxing Presentation

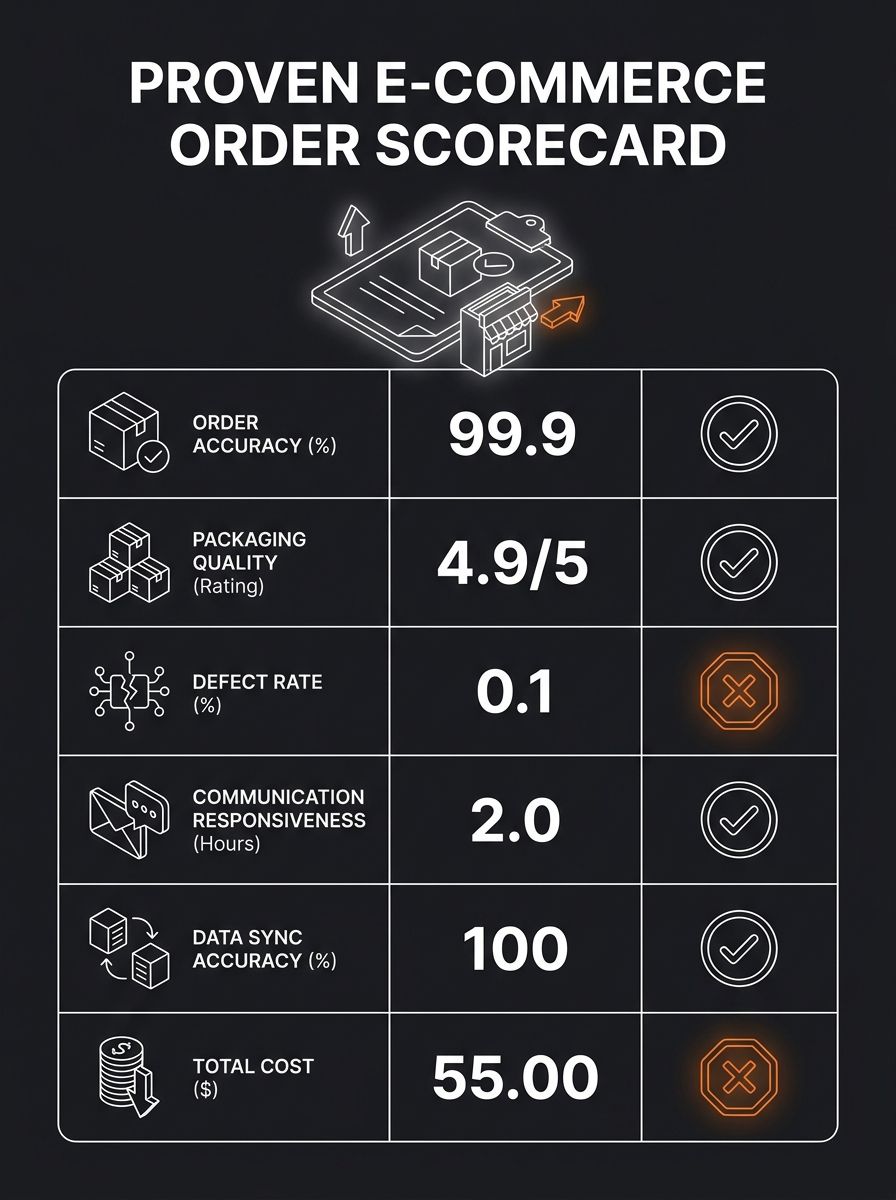

Packaging is where supplier vetting checklists get lazy. They'll ask "was the item damaged?" and leave it at that. But packaging affects your refund rate, your review score, and your repeat purchase rate in ways that don't show up for 30-60 days after launch.

When your test order arrives, document the following:

Outer box condition: Was there crushing, water damage, or tape failure? Photograph all six sides.

Inner packing material: Bubble wrap, air pillows, foam inserts, or bare cardboard? A $22 ceramic mug shipped in a poly bag with no padding is a 15-20% breakage rate waiting to happen.

Branding and inserts: Did the supplier include their own marketing materials, invoices showing their wholesale price, or third-party flyers? Any of these in a customer-facing package destroys the perception that you're a real brand.

Smell and residue: Products stored in damp warehouses or next to chemicals carry odors. Jewelry and textiles are especially vulnerable.
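
Writing the checklist down as a structured record makes one supplier's packaging easy to compare with another's. Below is a minimal sketch. The field names are hypothetical, and treating "none" or "bare cardboard" as no real padding is an assumption drawn from the mug example.

```python
from dataclasses import dataclass
from typing import List

# Packing descriptions treated as "no real padding" (a hypothetical list).
UNPADDED = {"", "none", "bare cardboard"}


@dataclass
class PackagingInspection:
    """Packaging notes for one test order (field names are hypothetical)."""
    outer_box_damage: bool        # crushing, water damage, or tape failure
    sides_photographed: int       # the checklist asks for all six
    inner_packing: str            # e.g. "bubble wrap", "air pillows", "foam", "none"
    supplier_inserts: bool        # supplier flyers, wholesale invoices, third-party ads
    odor_or_residue: bool

    def issues(self) -> List[str]:
        """Return the problems found; an empty list means the package passes."""
        found = []
        if self.outer_box_damage:
            found.append("outer box damaged")
        if self.sides_photographed < 6:
            found.append(f"only {self.sides_photographed} of 6 sides photographed")
        if self.inner_packing.strip().lower() in UNPADDED:
            found.append("no protective inner packing")
        if self.supplier_inserts:
            found.append("supplier branding or pricing in package")
        if self.odor_or_residue:
            found.append("odor or residue")
        return found


# Example: a ceramic mug that arrived in a poly bag with a supplier flyer
mug = PackagingInspection(
    outer_box_damage=False,
    sides_photographed=6,
    inner_packing="none",
    supplier_inserts=True,
    odor_or_residue=False,
)
print(mug.issues())
```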

If you're running a store where average order value sits above $30, packaging quality directly affects whether that customer orders again. That repeat purchase math matters more than saving $0.40 per unit on a supplier who ships in flimsy poly mailers. Understanding how your real margins break down makes this tradeoff obvious.
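
One way to make the tradeoff concrete is to ask how much repeat business the cheaper packaging can cost you before the per-unit savings are wiped out. Below is a rough sketch under a deliberately simple model, which is an assumption and not part of the article. Each first order leads to at most one repeat order, and flimsier packaging lowers that repeat probability by some amount. The 30% gross margin is a hypothetical placeholder.

```python
def breakeven_repeat_drop(savings_per_unit: float, profit_per_repeat_order: float) -> float:
    """Repeat-rate drop (as a fraction) at which packaging savings break even.

    Simplified model (an assumption): every order saves savings_per_unit, and
    cheaper packaging lowers the chance of one repeat order worth
    profit_per_repeat_order. Savings win only while the drop stays below
    the returned value.
    """
    if profit_per_repeat_order <= 0:
        raise ValueError("profit_per_repeat_order must be positive")
    return savings_per_unit / profit_per_repeat_order


# Hypothetical: $30 AOV at an assumed 30% gross margin -> $9 profit per repeat order
drop = breakeven_repeat_drop(0.40, 30 * 0.30)
print(f"break-even repeat-rate drop: {drop:.1%}")
```

Under these assumptions, a repeat-rate drop of just a few percentage points is enough to cancel the $0.40 saving.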

Defect Rate on Small-Batch Orders

Industry procurement standards often flag anything above 1,000 defective parts per million (DPPM), or 0.1%, as unacceptable. In dropshipping, you don't have the volume for DPPM math, so you need a different approach.

Order five units of the same product. Inspect each for:

Cosmetic defects (scratches, discoloration, loose threads, misaligned prints)

Functional defects (zippers that stick, electronics that don't power on, clasps that don't hold)

Dimensional accuracy (does the "large" actually match the size chart within 1cm tolerance?)

One defect out of five is a 20% defect rate. That's catastrophic. Two percent is the ceiling you should tolerate before launch. Because a single defect on a five-unit sample already registers as 20%, a five-unit test passes only with five clean units. Even one defective item should trigger a second round of testing or a switch to a different supplier entirely.
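
Five clean units screen out bad suppliers, but they can't show that the true defect rate is at or below 2%. The sketch below uses the standard zero-failure binomial bound, a statistical tool the playbook itself doesn't specify, to put numbers on that gap. The function names are hypothetical, and 95% confidence is an assumed default.

```python
import math


def zero_defect_upper_bound(n_units: int, confidence: float = 0.95) -> float:
    """Upper confidence bound on the true defect rate after n defect-free units.

    Exact binomial bound for zero observed defects: solve
    (1 - p) ** n = 1 - confidence for p.
    """
    if n_units < 1:
        raise ValueError("n_units must be at least 1")
    return 1 - (1 - confidence) ** (1 / n_units)


def units_needed(max_rate: float, confidence: float = 0.95) -> int:
    """Defect-free units needed before the upper bound falls to max_rate."""
    if not 0 < max_rate < 1:
        raise ValueError("max_rate must be between 0 and 1")
    return math.ceil(math.log(1 - confidence) / math.log(1 - max_rate))


print(f"5 clean units: true rate could still be as high as {zero_defect_upper_bound(5):.0%}")
print(f"clean units needed to support a 2% ceiling: {units_needed(0.02)}")
```

The takeaway fits the playbook's purpose. A five-unit test catches gross problems, but confirming a 2% ceiling statistically would take roughly 150 clean units.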

This is the part of dropshipping due diligence that most operators skip because it requires buying multiple test units instead of one. The cost of five test units at $8-15 each ($40-75 total) is trivial compared to a 12% return rate eating your ad spend for three weeks before you identify the problem.

Communication Responsiveness Under Pressure

Speed of email replies during the sales phase tells you nothing. Every supplier responds fast when they're trying to win your business. The real test is how they respond when something goes wrong.

During your test order process, intentionally create a minor disruption. Send a message requesting a shipping address change after the order is placed. Or ask for a tracking number update at an unusual hour. Record two things: response time (in hours, not days) and whether the response actually solves the problem or just acknowledges it.

Here's what the data should look like:

Under 12 hours with a solution: Strong signal. This supplier has staff monitoring messages across time zones or has clear SOPs for common requests.

12-24 hours with a solution: Acceptable for non-US-based suppliers. Expect this from most CJ and AliExpress partners.

Over 24 hours or acknowledgment without resolution: Red flag. If they can't handle one address change on one order, imagine 15 customer service tickets hitting them simultaneously during a winning ad campaign.
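
The three tiers map directly onto a rule you can apply to every disruption test. Below is a minimal sketch with a hypothetical function name. The text doesn't say which side the 12- and 24-hour edges fall on, so counting exactly 12 or 24 hours as "acceptable" is an assumption.

```python
def response_tier(hours_to_reply: float, resolved: bool) -> str:
    """Map one disruption test to the responsiveness tiers above."""
    if not resolved or hours_to_reply > 24:
        return "red flag"      # slow, or acknowledged without a fix
    if hours_to_reply < 12:
        return "strong"
    return "acceptable"        # 12-24 hours with a solution


print(response_tier(4, True))    # strong
print(response_tier(18, True))   # acceptable
print(response_tier(6, False))   # red flag
```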

As part of building your own supplier reliability scorecard, communication responsiveness should carry at least 20% of the total weight. A supplier who ships in three days but takes 48 hours to respond to a problem is worse than one who ships in five days and resolves issues in four hours.
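
A weighted scorecard shows why the slower but more responsive supplier can come out ahead. Below is a minimal sketch. The article fixes only one weight, communication at 20% or more. Every other weight, the 0-10 scale, and both suppliers' scores are hypothetical placeholders.

```python
# Hypothetical weights; the article only requires communication >= 20%.
WEIGHTS = {
    "communication": 0.25,
    "order_accuracy": 0.25,
    "defect_rate": 0.20,
    "packaging": 0.15,
    "shipping_speed": 0.15,
}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9, "weights must sum to 1"


def supplier_score(scores: dict) -> float:
    """Weighted 0-10 score; every weighted category must be scored."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise KeyError(f"missing scores: {sorted(missing)}")
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)


shared = {"order_accuracy": 10, "defect_rate": 8, "packaging": 7}
# A: ships in three days, 48-hour problem response
supplier_a = {**shared, "shipping_speed": 9, "communication": 2}
# B: ships in five days, resolves issues in four hours
supplier_b = {**shared, "shipping_speed": 6, "communication": 9}

print(f"A={supplier_score(supplier_a):.2f}  B={supplier_score(supplier_b):.2f}")
```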

Backend Data Sync and Inventory Accuracy

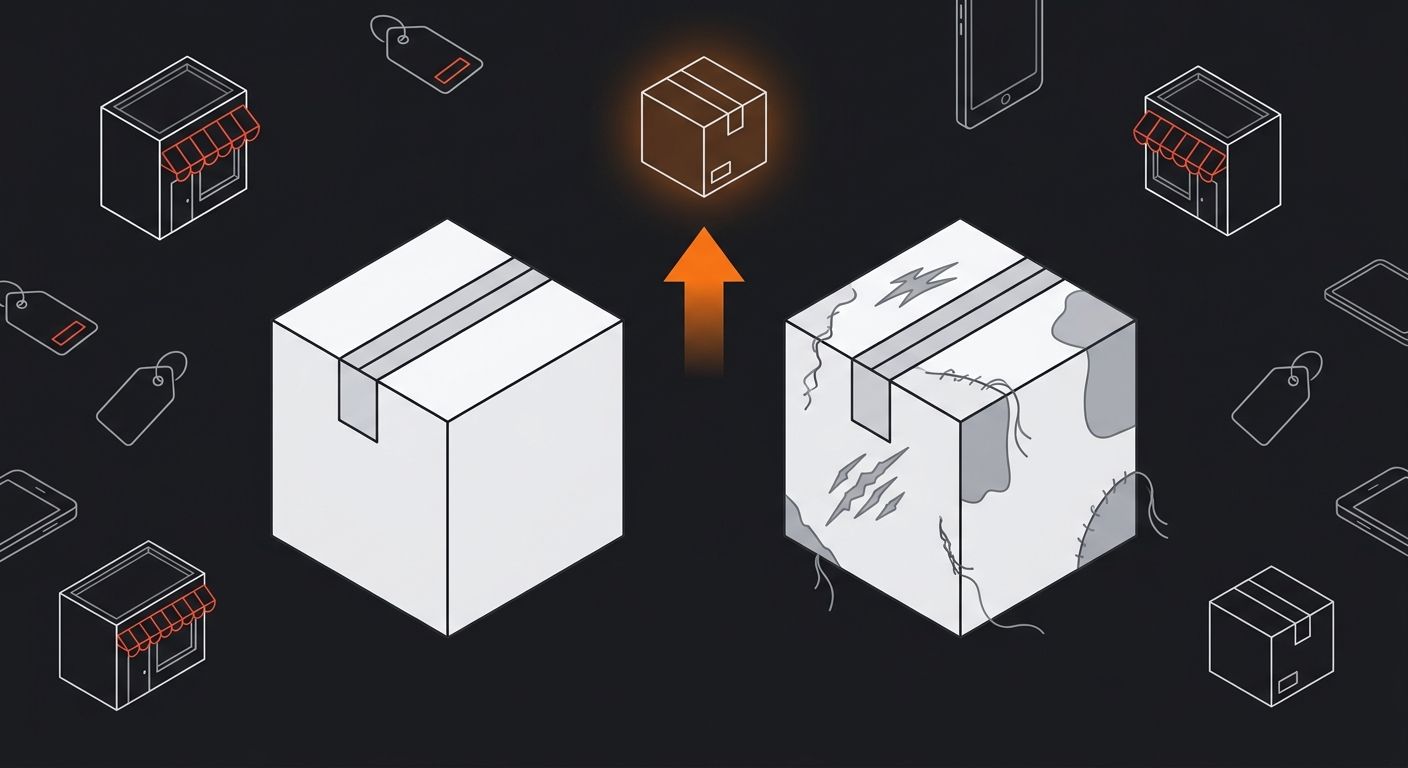

This layer is invisible to most operators until it causes overselling. You place a test order through your Shopify or WooCommerce store, and you need to verify that the supplier's system accurately reflects what happened.

Check these data points after your test order completes:

Tracking number accuracy: Does the tracking number provided actually correspond to your package in the carrier's system? Cross-reference it manually on 17track or the carrier's site.

Inventory status post-order: If the supplier claims real-time inventory updates, check whether their stock count decreased after your order. Inventory availability claims are notoriously unreliable across platforms, and a test order is your chance to verify the claim against reality.

Order status timestamps: Compare the timestamp of "shipped" status in the supplier's system against the carrier's first scan. Some suppliers mark orders as shipped when they print the label, not when the package actually enters the carrier network. A two-to-three day gap here means your advertised shipping times are already wrong.

According to Inventory Source's supplier evaluation checklist, you should evaluate whether a supplier's system automatically updates stock availability and pricing, since delays in these updates cause overselling and pricing inconsistencies. Your test order is the only way to validate this claim with real data instead of trusting a sales pitch.
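
Two of these checks reduce to comparisons you can run on the numbers you collect. Below is a minimal sketch. The function names, the 2-day flag threshold (taken from the low end of the two-to-three day gap mentioned above), and the sample readings are all hypothetical. Tracking-number verification stays manual.

```python
from datetime import datetime, timezone

# Hypothetical cutoff based on the two-to-three day gap described above.
GAP_FLAG_DAYS = 2.0


def label_to_scan_gap_days(supplier_shipped: datetime, carrier_first_scan: datetime) -> float:
    """Days between the supplier's 'shipped' status and the carrier's first scan.

    Both timestamps should be timezone-aware so that suppliers and carriers
    reporting in different zones are compared correctly.
    """
    return (carrier_first_scan - supplier_shipped).total_seconds() / 86400


def inventory_decremented(stock_before: int, stock_after: int, qty_ordered: int) -> bool:
    """True if the supplier's stock count fell by at least the quantity ordered.

    A larger drop is fine (other stores buy the same SKU). A restock between
    readings can mask the drop, so take both readings close to the order.
    """
    return stock_before - stock_after >= qty_ordered


# Hypothetical readings from one test order
gap = label_to_scan_gap_days(
    datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc),   # supplier marks "shipped"
    datetime(2024, 5, 3, 15, 0, tzinfo=timezone.utc),  # carrier's first scan
)
print(f"gap={gap:.2f} days, flag={gap >= GAP_FLAG_DAYS}")
print(inventory_decremented(stock_before=140, stock_after=140, qty_ordered=1))
```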

Total Cost of the Test Itself

A proper supplier quality assessment through test orders costs money. Knowing exactly how much keeps you from either overspending on tests or underspending and learning nothing.

Budget for a single-supplier evaluation:

5 test units at $8-15 each: $40-75

Shipping to your location (not a customer): $0-20 depending on supplier and warehouse location

Your time inspecting, photographing, and documenting (2-3 hours at whatever hourly value you put on your time): $50-150

Return shipping if testing return process: $6-15

Total per supplier: $96-260. If you're evaluating three suppliers for the same product, you're looking at $288-780 before you've sold anything.

That sounds expensive until you compare it to the alternative. Launching with an untested supplier and running $100/day in Facebook ads against a product with a 15% defect rate burns through $1,400 in ad spend in two weeks while generating returns that eat another $300-500 in direct costs. The test order investment pays for itself if it prevents even one bad supplier from reaching your live store.
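
Both the testing budget and the downside scenario are simple sums. The sketch below reproduces them. The helper names are hypothetical, and the "your time" line depends entirely on the hourly value you assign.

```python
# Per-supplier budget lines from the list above, as (low, high) in dollars.
BUDGET = {
    "test_units": (40, 75),       # 5 units at $8-15 each
    "shipping_to_you": (0, 20),
    "your_time": (50, 150),       # 2-3 hours of inspection and documentation
    "return_shipping": (6, 15),   # only if you test the return process
}


def budget_range(n_suppliers: int = 1) -> tuple:
    """Total (low, high) testing cost for evaluating n suppliers."""
    low = sum(lo for lo, _ in BUDGET.values())
    high = sum(hi for _, hi in BUDGET.values())
    return low * n_suppliers, high * n_suppliers


def untested_launch_loss(ads_per_day: float, days: int, return_costs: float) -> float:
    """Ad spend burned plus direct return costs for a launch with a bad supplier."""
    return ads_per_day * days + return_costs


print(budget_range(1))   # (96, 260)
print(budget_range(3))   # (288, 780)
# $100/day for two weeks, with the low and high return-cost estimates
print(untested_launch_loss(100, 14, 300), untested_launch_loss(100, 14, 500))
```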

And if you're reverse-engineering a winning store's product selection, remember that the store you're studying has already absorbed these testing costs. Their margins reflect a supplier relationship that's been validated. Yours hasn't yet.

Where This Playbook Falls Short

This test order validation model has real limitations, and pretending otherwise would be dishonest.

Sample size is inherently small. Five test orders don't capture seasonal variation, warehouse staffing changes, or the quality drift that happens when a supplier switches raw material vendors mid-run. A supplier who passes your test in March might decline by June. The playbook catches gross incompetence, not gradual erosion.

Test orders get special treatment. Some suppliers flag low-quantity orders from new accounts and give them priority handling. Your test experience may be better than what your customers receive at scale. The only mitigation is placing a second round of unannounced test orders 30-60 days after launch, with no advance communication to the supplier.

You can't test fulfillment speed under load. Your single test order moves through an empty queue. When Black Friday hits and that supplier is processing 10,000 orders across dozens of stores, your five-day average might become twelve days. No amount of pre-launch supplier testing simulates peak-season pressure.

Communication quality varies by contact. You might get an excellent rep during testing and a different, less responsive person once you're a live account. Document the name of every person you interact with and specifically request continuity when you scale.

The playbook works best as a filter to eliminate clearly bad suppliers before launch, not as a guarantee that the ones who pass will perform forever. Treat it as the first checkpoint in an ongoing evaluation cycle, with monthly spot-checks and quarterly reviews baked into your operations calendar. The suppliers who survive that ongoing scrutiny are the ones worth building a business around.

365 Dropship Editorial

Editorial team writing about E-commerce, dropshipping, and product discovery — reviews of dropshipping suppliers and platforms, trending niche guides (jewelry, beauty, pets, home, fashion), supplier due diligence, ecom operations, shipping & fulfillment strategy, product research, AOV optimization, and profitable dropshipping case studies.